By simply changing the plain English prompt, quickly experiment with different LLMs and applications

Author: Ian Kelk, Product Marketing Manager, Clarifai

👉 TLDR: This blog post showcases how to build an engaging and versatile chatbot using the Clarifai API and Streamlit. Links: Here is the app and the code.

This Streamlit app lets you chat with several Large Language Models. It has three main capabilities:

- It demonstrates how powerful and easy it is to integrate models provided by Clarifai using Streamlit & Langchain.

- You can evaluate the responses from multiple LLMs and choose the one that best suits your purpose.

- You can see how, just by changing the initial prompt to the LLM, you can completely change the entire nature of the app.

https://llm-text-adventure.streamlit.app

Introduction

Hello, Streamlit Community! 👋 I'm Ian Kelk, a machine learning enthusiast and Developer Relations Manager at Clarifai. My journey into data science began with a strong fascination for AI and its applications, particularly through the lens of natural language processing.

Problem statement

It can seem intimidating to have to create an entirely new Streamlit app every time you find a new use case for an LLM. It also requires knowing a fair amount of Python and the Streamlit API. What if, instead, we could create completely different apps just by changing the prompt? This requires nearly zero programming or expertise, and the results can be surprisingly good. In response to this, I’ve created a Streamlit chatbot application of sorts that works with a hidden starting prompt that can radically change its behaviour. It combines the interactivity of Streamlit’s features with the intelligence of Clarifai’s models.

In this post, you’ll learn how to build an AI-powered chatbot:

Step 1: Create the environment to work with Streamlit locally

Step 2: Create the secrets file and define the prompt

Step 3: Set up the Streamlit app

Step 4: Deploy the app on Streamlit’s cloud

App overview / Technical details

The application integrates the Clarifai API with a Streamlit interface. Clarifai is known for its complete toolkit for building production-scale AI, including models, a vector database, workflows, and UI modules, while Streamlit provides an elegant framework for user interaction. Using a secrets.toml file for secure handling of the Clarifai Personal Access Token (PAT) and additional settings, the application lets users interact with different Large Language Models (LLMs) through a chat interface. The secret sauce, however, is the inclusion of a separate prompts.py file, which allows the application to behave differently based purely on the prompt.

Let’s take a look at the app in action:

Step A

As with any Python project, it's always best to create a virtual environment. Here's how to create a virtual environment named llm-text-adventure using either conda or venv on Linux:

1. Using conda:

Create the virtual environment:

Note: Here, I'm specifying Python 3.8 as an example. You can replace it with your desired version.

Activate the virtual environment:

When you’re done and want to deactivate the environment:

2. Using venv:

First, ensure you have the venv module installed. If not, install the required version of Python, which includes venv by default. If you have Python 3.3 or newer, venv should be included.

Create the virtual environment:

Note: You may need to replace python3 with just python or another specific version, depending on your system setup.

Activate the virtual environment:

When the environment is activated, you'll see the environment name (llm-text-adventure) at the beginning of your command prompt.

To deactivate the virtual environment and return to the global Python environment:

That's it! Depending on your project requirements and the tools you're familiar with, you can choose either conda or venv.
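If you would rather script the venv route, Python's standard-library venv module can do the same job as the usual python3 -m venv llm-text-adventure command. This is only a sketch: the create_env helper is a name made up for illustration and is not part of the app.

```python
import os
import venv
from pathlib import Path


def create_env(env_dir, with_pip=True):
    """Create a virtual environment and return the path to its activate script.

    Hypothetical helper: roughly equivalent to `python3 -m venv <env_dir>`.
    """
    env_path = Path(env_dir)
    venv.create(env_path, with_pip=with_pip)
    # The activate script lives in Scripts/ on Windows and bin/ elsewhere.
    scripts_dir = "Scripts" if os.name == "nt" else "bin"
    return env_path / scripts_dir / "activate"
```

Calling create_env("llm-text-adventure") creates the folder in the current directory. You still activate and deactivate the environment from your shell, as described above.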

Step B

The next step starts with creating a secrets.toml file, which stores Clarifai’s PAT and defines the large language models that will be available to the chatbot.

This file holds two settings. The first is the PAT (personal access token) for your app, which you should never share publicly. The second is our list of default models, which isn't an important secret but determines which LLMs you'll offer.

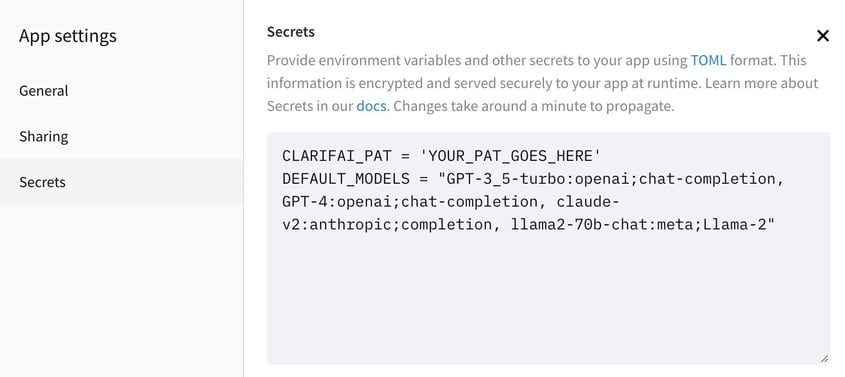

Here's an example secrets.toml. Note that when hosting this on the Streamlit cloud, you need to go into your app settings -> secrets and add these lines so that the Streamlit servers can use the information. The following DEFAULT_MODELS provides GPT-3.5 and GPT-4, Claude v2, and the three sizes of Llama2 trained for instructions.

On Streamlit’s cloud, this would look like this:

Step C

This step involves setting up the Streamlit app (app.py). I’ve broken it up into several substeps since this is a long section.

Importing Python libraries and modules:

Import the essential APIs and modules needed for the application, such as Streamlit for the app interface, Clarifai for interfacing with the Clarifai API, and the chat-related APIs.

Set the layout:

Configure the Streamlit app to use the “wide” layout, which makes more horizontal space available on the page.

Define helper functions:

These functions load the PAT and LLMs, keep a record of the chat history, and handle the chat interactions between the user and the AI.

Define prompt lists and load PAT:

Define the list of available prompts and load the personal access token (PAT) from the secrets.toml file. Select models and append them to the llms_map.
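To make the model-loading step more concrete, here is a minimal sketch of turning a DEFAULT_MODELS string into llms_map. The entry format (a display name followed by a Clarifai user ID and app ID, written as name:user_id;app_id and separated by commas) is an assumption for illustration, as are the sample values and the parse_models helper. Check your own secrets.toml for the real format.

```python
def parse_models(default_models):
    """Parse 'name:user_id;app_id, ...' into {name: {"user_id": ..., "app_id": ...}}.

    The string format is assumed for illustration, not taken from the app.
    """
    llms_map = {}
    for entry in default_models.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate trailing commas and blank entries
        name, _, owner = entry.partition(":")
        user_id, _, app_id = owner.partition(";")
        llms_map[name.strip()] = {"user_id": user_id.strip(), "app_id": app_id.strip()}
    return llms_map


# Hypothetical value, standing in for what the app would read from secrets.toml.
DEFAULT_MODELS = "GPT-4:openai;chat-completion, Claude-v2:anthropic;completion"
llms_map = parse_models(DEFAULT_MODELS)
```

Keeping the display name as the dictionary key means the same names can be offered to the user when choosing an LLM and then looked up again when the model is loaded.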

Prompt the user for prompt selection:

Use Streamlit’s built-in select box widget to ask the user to select one of the provided prompts from prompt_list.

Choose the LLM:

Present a choice of large language models (LLMs) so the user can select the one they want.

Initialize the model and set the chatbot instruction:

Load the language model selected by the user. Initialize the chat with the selected prompt.

Initialize the conversation chain:

Use a ConversationChain to handle the conversation between the user and the AI.
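Conceptually, a conversation chain keeps the running transcript and prepends it, together with the hidden instruction, to every new user message before calling the model. The sketch below imitates that idea in plain Python so it runs without any dependencies. It is not LangChain's ConversationChain, and SimpleConversation and the fake model are made-up stand-ins.

```python
class SimpleConversation:
    """Toy stand-in for a conversation chain with buffer memory."""

    def __init__(self, llm, instruction):
        self.llm = llm                  # any callable: prompt text -> reply text
        self.instruction = instruction  # the hidden starting prompt
        self.history = []               # list of (speaker, text) tuples

    def build_prompt(self, user_input):
        # Instruction first, then the transcript so far, then the new message.
        lines = [self.instruction]
        lines += [f"{speaker}: {text}" for speaker, text in self.history]
        lines += [f"Human: {user_input}", "AI:"]
        return "\n".join(lines)

    def predict(self, user_input):
        reply = self.llm(self.build_prompt(user_input))
        # Remember both sides so the next call sees the full conversation.
        self.history.append(("Human", user_input))
        self.history.append(("AI", reply))
        return reply


def fake_llm(prompt):
    """Fake model for demonstration: reports how many lines it was given."""
    return f"I received {len(prompt.splitlines())} lines."


chat = SimpleConversation(fake_llm, "You are an Italian tutor.")
first = chat.predict("Ciao!")
second = chat.predict("Come stai?")
```

The second call sees a longer prompt than the first because the earlier exchange has been added to the history, which is what lets the model stay in character across turns.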

Initialize the chatbot:

Use the model to generate the first message and store it in the chat history in the session state.

Manage conversation and display messages:

Show all previous chat messages and call the chatbot() function to continue the conversation.
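Streamlit reruns the whole script on every interaction, so the chat history has to live in session state and the opening message should only be generated once. Here is a small sketch of that pattern, using a plain dict in place of st.session_state so that it runs on its own. The function names and the fake model replies are illustrative assumptions, not the app's actual helpers.

```python
def init_chat(state, generate_first_message):
    """Create the history and the opening AI message on the first run only."""
    if "chat_history" not in state:
        state["chat_history"] = [
            {"role": "assistant", "content": generate_first_message()}
        ]
    return state["chat_history"]


def handle_turn(state, user_input, respond):
    """Record the user's message, ask the model, and record its reply."""
    history = state["chat_history"]
    history.append({"role": "user", "content": user_input})
    reply = respond(user_input)
    history.append({"role": "assistant", "content": reply})
    return reply


def render(history):
    """Stand-in for drawing chat bubbles: returns one text line per message."""
    return [f'{m["role"]}: {m["content"]}' for m in history]


state = {}  # plays the part of st.session_state
init_chat(state, lambda: "Welcome, Ser Knight!")
init_chat(state, lambda: "This second call must not reset the chat.")
handle_turn(state, "1", lambda text: f"You chose option {text}.")
transcript = render(state["chat_history"])
```

The second init_chat call mimics a rerun of the script: because the history already exists, the opening message is left alone and the conversation carries on.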

That's the step-by-step walkthrough of what each section in app.py does. Here is the full implementation:

Step D

This is the fun part! All the other code in this tutorial already works fine out of the box, and the only thing you need to change to get different behaviour is the prompts.py file:

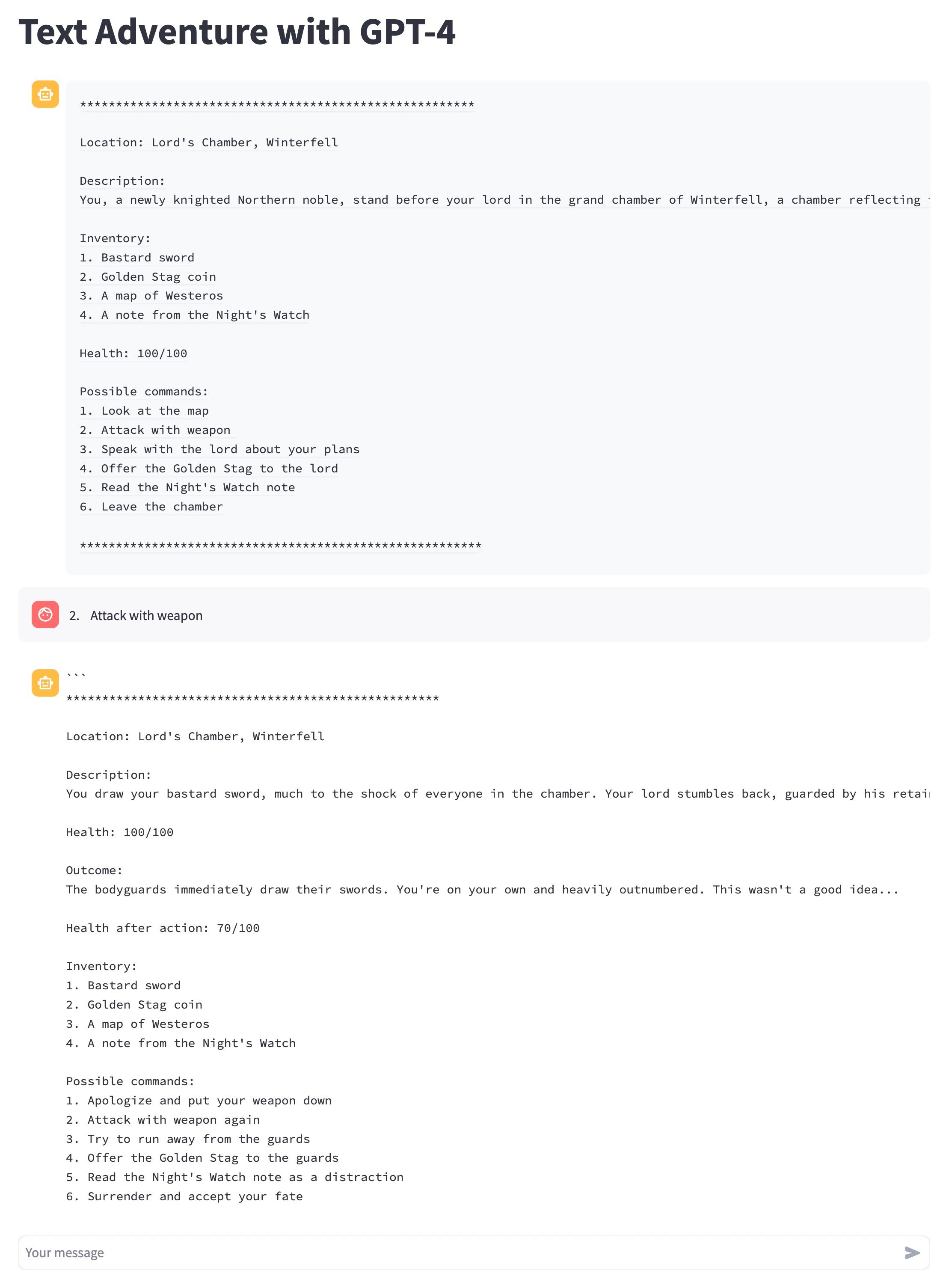

- “Text Adventure”: In this mode, the chatbot is instructed to behave as a text adventure video game. The game is set in the world of “A Song of Ice and Fire”. The user plays a recently knighted character, and their interactions determine how the game unfolds. The chatbot presents the user with 6 options at each turn, including an ASCII map and the option to ‘Attack with a weapon.’ The user interacts with the game by entering the corresponding option numbers. It’s intended to give a realistic text-based RPG experience, with conditions such as the user’s inventory changing based on the user’s actions.

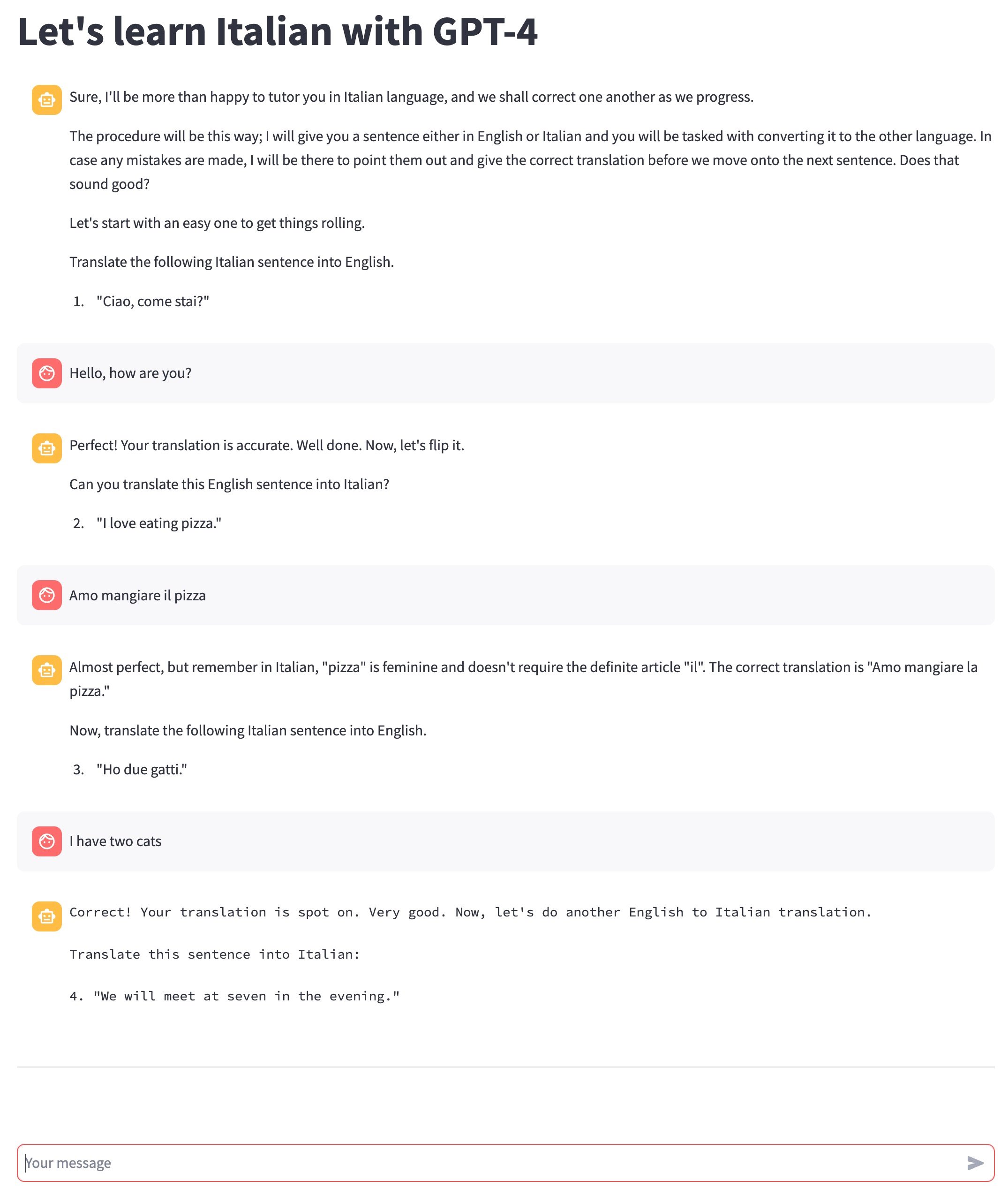

- “Italian Tutor”: Here, the bot plays the role of an Italian tutor. It presents sentences that the user has to translate, alternating between English to Italian and Italian to English. If the user makes a mistake, the bot corrects them and gives the right translation. It's designed for users who want to practice their Italian language skills in a conversational setup.

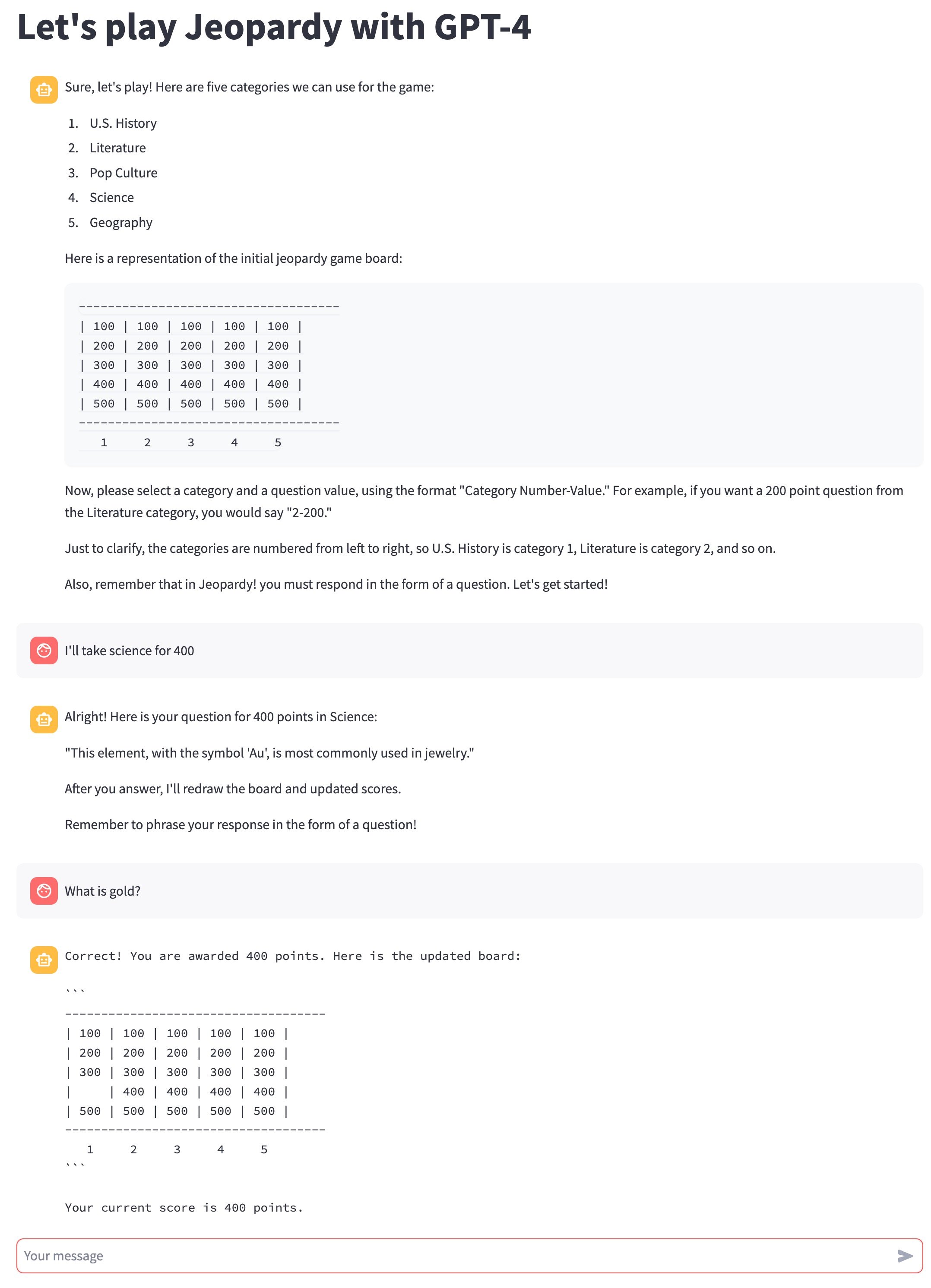

- “Jeopardy”: In this mode, the chatbot emulates a game of ‘Jeopardy’ with the user. The bot presents several categories and an ASCII representation of a game board. Each category has 5 questions, with values from 100 to 500. The user selects a category and the value of a question, and the bot asks the corresponding question. The user answers in Jeopardy’s signature style, phrased as a question. If the user gets it right, they earn points, and if they get it wrong, points are deducted. The game ends when all questions have been answered, and the bot reports the final score.

Pretty cool, right? All running the same code! You can add new applications just by adding new, plain English options to the prompts.py file, and experiment away!
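As a rough illustration, a prompts file can be little more than a list of named instructions. The structure below is an assumption (the real prompts.py may be organised differently), and the prompt texts are shortened paraphrases of the modes described above, plus one invented extra.

```python
# Hypothetical layout for a prompts.py file: one dict per application.
prompt_list = [
    {
        "name": "Text Adventure",
        "template": "Act as a text adventure game set in the world of "
                    "'A Song of Ice and Fire'. Offer 6 numbered options each turn.",
    },
    {
        "name": "Italian Tutor",
        "template": "Act as an Italian tutor. Give me sentences to translate, "
                    "alternating between English and Italian, and correct my mistakes.",
    },
]

# Adding a new application is just one more plain-English entry (invented example).
prompt_list.append({
    "name": "Trivia Host",
    "template": "Host a trivia quiz. Ask one question at a time and keep score.",
})


def get_template(name):
    """Look up the instruction text for the option chosen in the select box."""
    for prompt in prompt_list:
        if prompt["name"] == name:
            return prompt["template"]
    raise KeyError(f"Unknown prompt: {name}")


prompt_names = [p["name"] for p in prompt_list]  # what a select box would show
```

With a layout like this, the names feed the select box and the matching template becomes the hidden starting prompt, so no other code needs to change when you add an entry.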