Picture by Creator

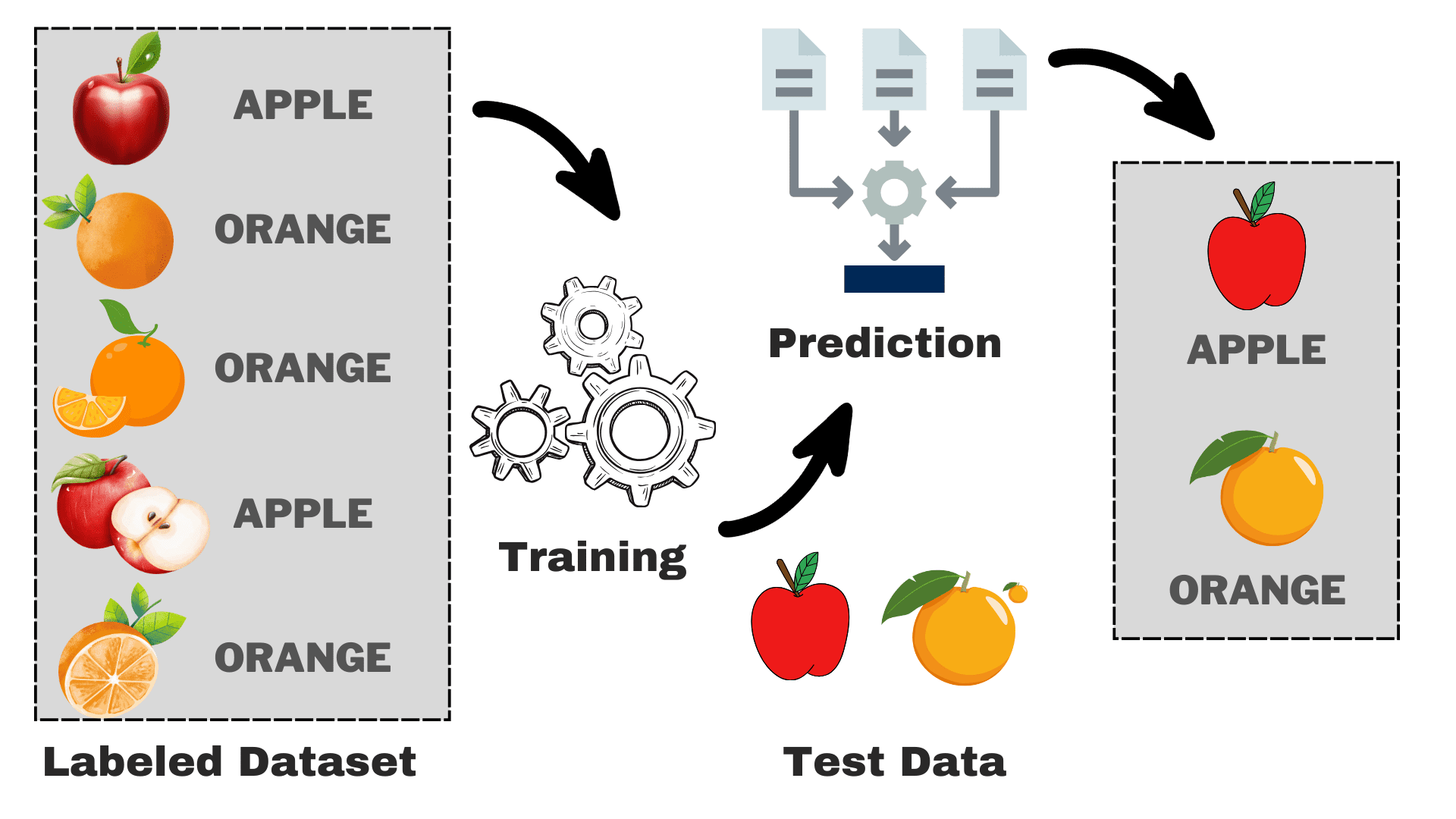

Supervised is a subcategory of machine studying wherein the pc learns from the labeled dataset containing each the enter in addition to the right output. It tries to seek out the mapping operate that relates the enter (x) to the output (y). You may consider it as instructing your youthful brother or sister the best way to acknowledge totally different animals. You’ll present them some photos (x) and inform them what every animal is named (y). After a sure time, they may study the variations and can be capable of acknowledge the brand new image accurately. That is the fundamental instinct behind supervised studying. Earlier than transferring ahead, let’s take a deeper have a look at its workings.

How Does Supervised Studying Work?

Picture by Creator

Suppose that you just need to construct a mannequin that may differentiate between apples and oranges primarily based on some traits. We are able to break down the method into the next duties:

- Information Assortment: Collect a dataset with photos of apples and oranges, and every picture is labeled as both “apple” or “orange.”

- Mannequin Choice: Now we have to choose the fitting classifier right here typically often known as the fitting supervised machine studying algorithm to your process. It is rather like choosing the right glasses that may enable you to see higher

- Coaching the Mannequin: Now, you feed the algorithm with the labeled pictures of apples and oranges. The algorithm seems at these photos and learns to acknowledge the variations, akin to the colour, form, and measurement of apples and oranges.

- Evaluating & Testing: To test in case your mannequin is working accurately, we are going to feed some unseen photos to it and evaluate the predictions with the precise one.

Supervised studying could be divided into two principal varieties:

Classification

In classification duties, the first goal is to assign knowledge factors to particular classes from a set of discrete courses. When there are solely two doable outcomes, akin to “sure” or “no,” “spam” or “not spam,” “accepted” or “rejected,” it’s known as binary classification. Nevertheless, when there are greater than two classes or courses concerned, like grading college students primarily based on their marks (e.g., A, B, C, D, F), it turns into an instance of a multi-classification downside.

Regression

For regression issues, you are attempting to foretell a steady numerical worth. For instance, you is perhaps keen on predicting your closing examination scores primarily based in your previous efficiency within the class. The expected scores can span any worth inside a particular vary, sometimes from 0 to 100 in our case.

Now, we’ve a primary understanding of the general course of. We’ll discover the favored supervised machine studying algorithms, their utilization, and the way they work:

1. Linear Regression

Because the title suggests, it’s used for regression duties like predicting inventory costs, forecasting the temperature, estimating the probability of illness development, and many others. We attempt to predict the goal (dependent variable) utilizing the set of labels (impartial variables). It assumes that we’ve a linear relationship between our enter options and the label. The central thought revolves round predicting the best-fit line for our knowledge factors by minimizing the error between our precise and predicted values. This line is represented by the equation:

The place,

- Y Predicted output.

- X = Enter characteristic or characteristic matrix in a number of linear regression

- b0 = Intercept (the place the road crosses the Y-axis).

- b1 = Slope or coefficient that determines the road’s steepness.

It estimates the slope of the road (weight) and its intercept(bias). This line can be utilized additional to make predictions. Though it’s the easiest and helpful mannequin for growing the baselines it’s extremely delicate to outliers that will affect the place of the road.

Gif on Primo.ai

2. Logistic Regression

Though it has regression in its title, however is basically used for binary classification issues. It predicts the likelihood of a constructive final result (dependent variable) which lies within the vary of 0 to 1. By setting a threshold (normally 0.5), we classify knowledge factors: these with a likelihood higher than the brink belongs to the constructive class, and vice versa. Logistic regression calculates this likelihood utilizing the sigmoid operate utilized to the linear mixture of the enter options which is specified as:

The place,

- P(Y=1) = Likelihood of the information level belonging to the constructive class

- X1 ,… ,Xn = Enter Options

- b0,….,bn = Enter weights that the algorithm learns throughout coaching

This sigmoid operate is within the type of S like curve that transforms any knowledge level to a likelihood rating throughout the vary of 0-1. You may see the beneath graph for a greater understanding.

Picture on Wikipedia

A better worth to 1 signifies the next confidence within the mannequin in its prediction. Identical to linear regression, it’s recognized for its simplicity however we can’t carry out the multi-class classification with out modification to the unique algorithm.

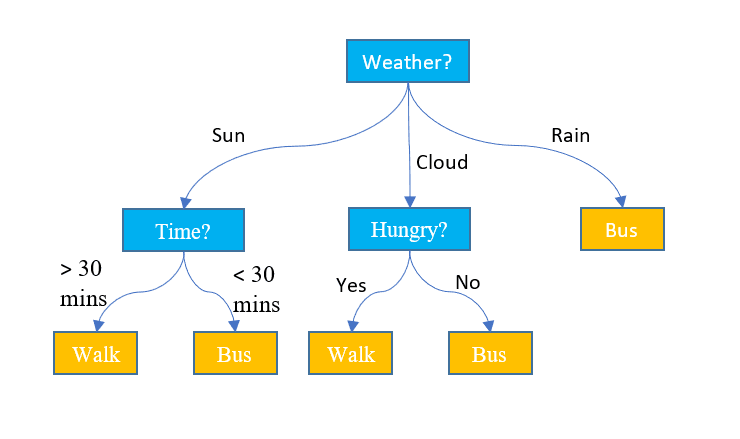

3. Resolution Timber

Not like the above two algorithms, choice bushes can be utilized for each classification and regression duties. It has a hierarchical construction identical to the flowcharts. At every node, a call concerning the path is made primarily based on some characteristic values. The method continues except we attain the final node that depicts the ultimate choice. Right here is a few primary terminology that you have to concentrate on:

- Root Node: The highest node containing the complete dataset is named the basis node. We then choose the very best characteristic utilizing some algorithm to separate the dataset into 2 or extra sub-trees.

- Inside Nodes: Every Inside node represents a particular characteristic and a call rule to resolve the following doable route for a knowledge level.

- Leaf Nodes: The ending nodes that characterize a category label are known as leaf nodes.

It predicts the continual numerical values for the regression duties. As the dimensions of the dataset grows, it captures the noise resulting in overfitting. This may be dealt with by pruning the choice tree. We take away branches that do not considerably enhance the accuracy of our choices. This helps preserve our tree centered on crucial components and prevents it from getting misplaced within the particulars.

Picture by Jake Hoare on Displayr

4. Random Forest

Random forest can be used for each the classification and the regression duties. It’s a group of choice bushes working collectively to make the ultimate prediction. You may consider it because the committee of consultants making a collective choice. Right here is the way it works:

- Information Sampling: As a substitute of taking the complete dataset directly, it takes the random samples by way of a course of known as bootstrapping or bagging.

- Function Choice: For every choice tree in a random forest, solely the random subset of options is taken into account for the decision-making as an alternative of the whole characteristic set.

- Voting: For classification, every choice tree within the random forest casts its vote and the category with the best votes is chosen. For regression, we common the values obtained from all bushes.

Though it reduces the impact of overfitting attributable to particular person choice bushes, however is computationally costly. One phrase that you’ll learn continuously within the literature is that the random forest is an ensemble studying methodology, which suggests it combines a number of fashions to enhance total efficiency.

5. Assist Vector Machines (SVM)

It’s primarily used for classification issues however can deal with regression duties as nicely. It tries to seek out the very best hyperplane that separates the distinct courses utilizing the statistical method, not like the probabilistic method of logistic regression. We are able to use the linear SVM for the linearly separable knowledge. Nevertheless, a lot of the real-world knowledge is non-linear and we use the kernel methods to separate the courses. Let’s dive deep into the way it works:

- Hyperplane Choice: In binary classification, SVM finds the very best hyperplane (2-D line) to separate the courses whereas maximizing the margin. Margin is the gap between the hyperplane and the closest knowledge factors to the hyperplane.

- Kernel Trick: For linearly inseparable knowledge, we make use of a kernel trick that maps the unique knowledge house right into a high-dimensional house the place they are often separated linearly. Frequent kernels embrace linear, polynomial, radial foundation operate (RBF), and sigmoid kernels.

- Margin Maximization: SVM additionally tries to enhance the generalization of the mannequin by rising the maximizing margin.

- Classification: As soon as the mannequin is educated, the predictions could be made primarily based on their place relative to the hyperplane.

SVM additionally has a parameter known as C that controls the trade-off between maximizing the margin and retaining the classification error to a minimal. Though they’ll deal with high-dimensional and non-linear knowledge nicely, selecting the best kernel and hyperparameter will not be as simple because it appears.

Picture on Javatpoint

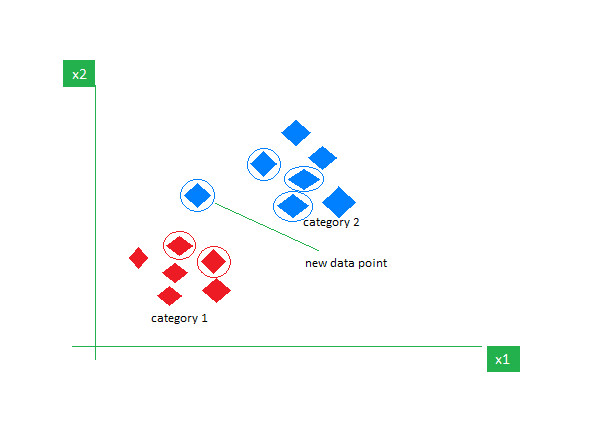

6. k-Nearest Neighbors (k-NN)

Ok-NN is the only supervised studying algorithm principally used for classification duties. It doesn’t make any assumptions concerning the knowledge and assigns the brand new knowledge level a class primarily based on its similarity with the prevailing ones. In the course of the coaching section, it retains the complete dataset as a reference level. It then calculates the gap between the brand new knowledge level and all the prevailing factors utilizing a distance metric (Eucilinedain distance e.g.). Based mostly on these distances, it identifies the Ok nearest neighbors to those knowledge factors. We then rely the prevalence of every class within the Ok nearest neighbors and assign probably the most continuously showing class as the ultimate prediction.

Picture on GeeksforGeeks

Selecting the best worth of Ok requires experimentation. Though it’s sturdy to noisy knowledge it’s not appropriate for prime dimensional datasets and has a excessive value related as a result of calculation of the gap from all knowledge factors.

As I conclude this text, I might encourage the readers to discover extra algorithms and attempt to implement them from scratch. It will strengthen your understanding of how issues are working beneath the hood. Listed here are some extra assets that will help you get began:

Kanwal Mehreen is an aspiring software program developer with a eager curiosity in knowledge science and purposes of AI in medication. Kanwal was chosen because the Google Technology Scholar 2022 for the APAC area. Kanwal likes to share technical information by writing articles on trending subjects, and is obsessed with enhancing the illustration of girls in tech trade.