Picture by Writer

In machine studying, unsupervised studying is a paradigm that includes coaching an algorithm on an unlabeled dataset. So there’s no supervision or labeled outputs.

In unsupervised studying, the purpose is to find patterns, buildings, or relationships inside the knowledge itself, moderately than predicting or classifying primarily based on labeled examples. It includes exploring the inherent construction of the information to achieve insights and make sense of advanced data.

This information will introduce you to unsupervised studying. We’ll begin by going over the variations between supervised and unsupervised studying—to put the bottom for the rest of the dialogue. We’ll then cowl the important thing unsupervised studying strategies and the favored algorithms inside them.

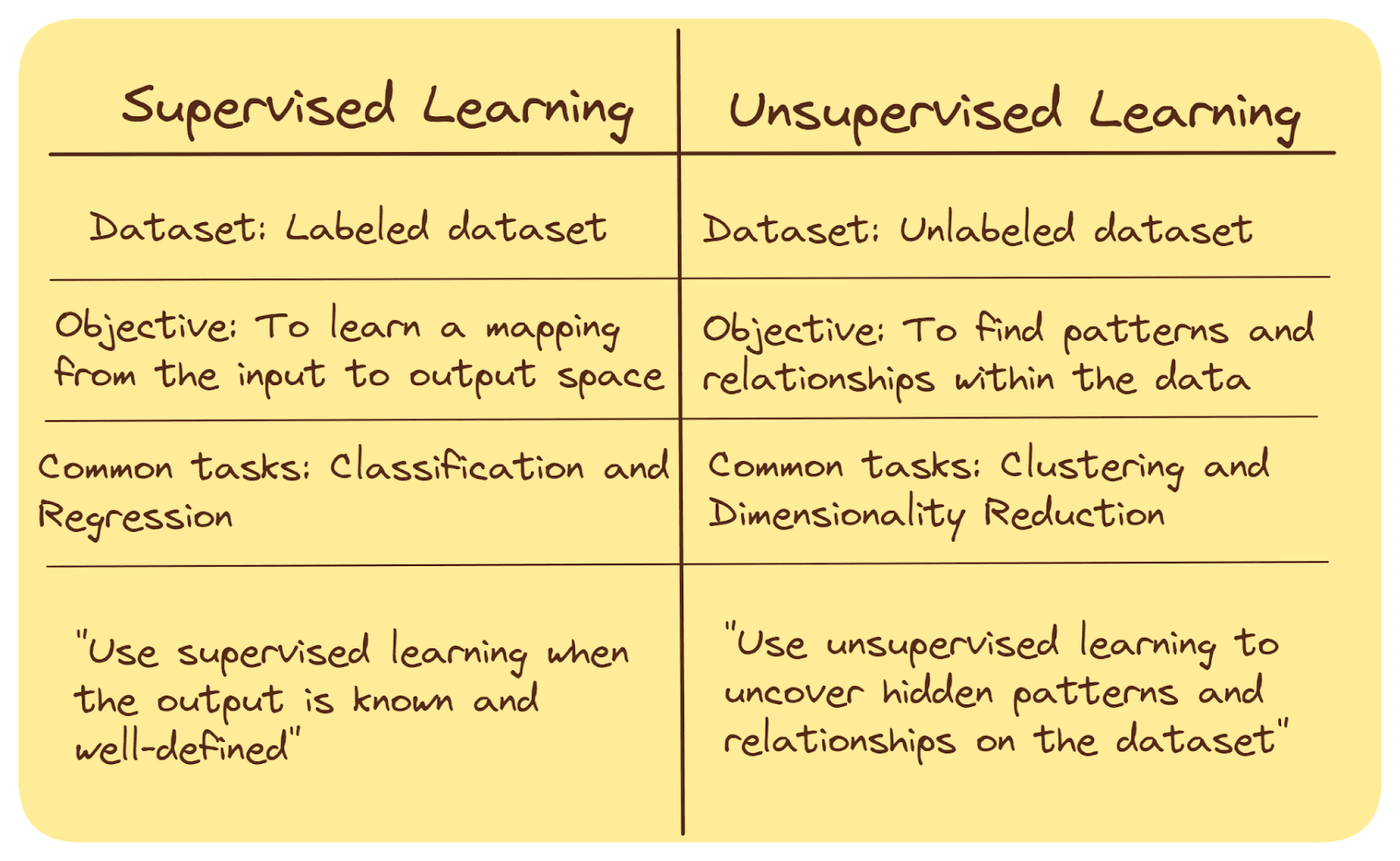

Supervised and unsupervised machine studying are two totally different approaches used within the discipline of synthetic intelligence and knowledge evaluation. Here is a quick abstract of their key variations:

Coaching Knowledge

In supervised studying, the algorithm is skilled on a labeled dataset, the place enter knowledge is paired with corresponding desired output (labels or goal values).

Unsupervised studying, then again, includes working with an unlabeled dataset, the place there are not any predefined output labels.

Goal

The purpose of supervised studying algorithms is to be taught a relationship—a mapping—from the enter to the output area. As soon as the mapping is discovered, we are able to use the mannequin to foretell the output values or class label for unseen knowledge factors.

In unsupervised studying, the purpose is to discover patterns, buildings, or relationships inside the knowledge, typically for clustering knowledge factors into teams, exploratory evaluation or characteristic extraction.

Frequent Duties

Classification (assigning a category label—one of many many predefined classes—to a beforehand unseen knowledge level) and regression (predicting steady values) are frequent duties in supervised studying.

Clustering (grouping related knowledge factors) and dimensionality discount (lowering the variety of options whereas preserving essential data) are frequent duties in unsupervised studying. We’ll focus on these in larger element shortly.

When To Use

Supervised studying is extensively used when the specified output is understood and well-defined, comparable to spam e-mail detection, picture classification, and medical prognosis.

Unsupervised studying is used when there’s restricted or no prior data concerning the knowledge and the target is to uncover hidden patterns or achieve insights from the information itself.

Right here’s a abstract of the variations:

Supervised vs. Unsupervised Studying | Picture by Writer

Summing up: Supervised studying focuses on studying from labeled knowledge to make predictions or classifications, whereas unsupervised studying seeks to find patterns and relationships inside unlabeled knowledge. Each approaches have their very own functions—primarily based on the character of the information and the issue at hand.

As mentioned, in unsupervised studying, we now have the enter knowledge and are tasked with discovering significant patterns or representations inside that knowledge. Unsupervised studying algorithms achieve this by figuring out similarities, variations, and relationships among the many knowledge factors with out being supplied with predefined classes or labels.

For this dialogue, we’ll go over the 2 essential unsupervised studying strategies:

- Clustering

- Dimensionality Discount

What Is Clustering?

Clustering includes grouping related knowledge factors collectively into clusters primarily based on some similarity measure. The algorithm goals to search out pure teams or classes inside the knowledge the place knowledge factors in the identical cluster are extra related to one another than to these in different clusters.

As soon as we now have the dataset grouped into totally different clusters we are able to basically label them. And if wanted, we are able to carry out supervised studying on the clustered dataset.

What Is Dimensionality Discount?

Dimensionality discount refers to strategies that cut back the variety of options—dimensions—within the knowledge whereas preserving essential data. Excessive-dimensional knowledge might be advanced and troublesome to work with, so dimensionality discount helps in simplifying the information for evaluation.

Each clustering and dimensionality discount are highly effective strategies in unsupervised studying, offering priceless insights and simplifying advanced knowledge for additional evaluation or modeling.

Within the the rest of the article, let’s evaluation essential clustering and dimensionality discount algorithms.

As mentioned, clustering is a elementary method in unsupervised studying that includes grouping related knowledge factors collectively into clusters, the place knowledge factors inside the identical cluster are extra related to one another than to these in different clusters. Clustering helps establish pure divisions inside the knowledge, which may present insights into patterns and relationships.

There are numerous algorithms used for clustering, every with its personal strategy and traits:

Ok-Means Clustering

Ok-Means clustering is an easy, sturdy, and generally used algorithm. It partitions the information right into a predefined variety of clusters (Ok) by iteratively updating cluster centroids primarily based on the imply of information factors inside every cluster.

It iteratively refines cluster assignments till convergence.

Right here’s how the Ok-Means clustering algorithm works:

- Initialize Ok cluster centroids.

- Assign every knowledge level—primarily based on the chosen distance metric—to the closest cluster centroid.

- Replace centroids by computing the imply of information factors in every cluster.

- Repeat steps 2 and three till convergence or an outlined variety of iterations.

Hierarchical Clustering

Hierarchical clustering creates a tree-like construction—a dendrogram—of information factors, capturing similarities at a number of ranges of granularity. Agglomerative clustering is essentially the most generally used hierarchical clustering algorithm. It begins with particular person knowledge factors as separate clusters and progressively merges them primarily based on a linkage criterion, comparable to distance or similarity.

Right here’s how the agglomerative clustering algorithm works:

- Begin with `n` clusters: every knowledge level as its personal cluster.

- Merge closest knowledge factors/clusters into a bigger cluster.

- Repeat 2. till a single cluster stays or an outlined variety of clusters is reached.

- The consequence might be interpreted with the assistance of a dendrogram.

Density-Based mostly Spatial Clustering of Purposes with Noise (DBSCAN)

DBSCAN identifies clusters primarily based on the density of information factors in a neighborhood. It could possibly discover arbitrarily formed clusters and may establish noise factors and detect outliers.

The algorithm includes the next (simplified to incorporate the important thing steps):

- Choose a knowledge level and discover its neighbors inside a specified radius.

- If the purpose has adequate neighbors, broaden the cluster by together with the neighbors of its neighbors.

- Repeat for all factors, forming clusters related by density.

Dimensionality discount is the method of lowering the variety of options (dimensions) in a dataset whereas retaining important data. Excessive-dimensional knowledge might be advanced, computationally costly, and is liable to overfitting. Dimensionality discount algorithms assist simplify knowledge illustration and visualization.

Principal Element Evaluation (PCA)

Principal Element Evaluation—or PCA—transforms knowledge into a brand new coordinate system to maximise variance alongside the principal elements. It reduces knowledge dimensions whereas preserving as a lot variance as doable.

Right here’s how one can carry out PCA for dimensionality discount:

- Compute the covariance matrix of the enter knowledge.

- Carry out eigenvalue decomposition on the covariance matrix. Compute the eigenvectors and eigenvalues of the covariance matrix.

- Type eigenvectors by eigenvalues in descending order.

- Undertaking knowledge onto the eigenvectors to create a lower-dimensional illustration.

t-Distributed Stochastic Neighbor Embedding (t-SNE)

The primary time I used t-SNE was to visualise phrase embeddings. t-SNE is used for visualization by lowering high-dimensional knowledge to a lower-dimensional illustration whereas sustaining native pairwise similarities.

Here is how t-SNE works:

- Assemble likelihood distributions to measure pairwise similarities between knowledge factors in high-dimensional and low-dimensional areas.

- Decrease the divergence between these distributions utilizing gradient descent. Iteratively transfer knowledge factors within the lower-dimensional area, adjusting their positions to attenuate the associated fee operate.

As well as, there are deep studying architectures comparable to autoencoders that can be utilized for dimensionality discount. Autoencoders are neural networks designed to encode after which decode knowledge, successfully studying a compressed illustration of the enter knowledge.

Let’s discover some functions of unsupervised studying. Listed here are some examples:

Buyer Segmentation

In advertising and marketing, companies use unsupervised studying to phase their buyer base into teams with related behaviors and preferences. This helps tailor advertising and marketing methods, campaigns, and product choices. For instance, retailers categorize prospects into teams comparable to “funds customers,” “luxurious patrons,” and “occasional purchasers.”

Doc Clustering

You may run a clustering algorithm on a corpus of paperwork. This helps group related paperwork collectively, aiding in doc group, search, and retrieval.

Anomaly Detection

Unsupervised studying can be utilized to establish uncommon and strange patterns—anomalies—in knowledge. Anomaly detection has functions in fraud detection and community safety to detect uncommon—anomalous—conduct. Detecting fraudulent bank card transactions by figuring out uncommon spending patterns is a sensible instance.

Picture Compression

Clustering can be utilized for picture compression to remodel pictures from high-dimensional coloration area to a a lot decrease dimensional coloration area. This reduces picture storage and transmission measurement by representing related pixel areas with a single centroid.

Social Community Evaluation

You may analyze social community knowledge—primarily based on consumer interactions—to uncover communities, influencers, and patterns of interplay.

Subject Modeling

In pure language processing, the duty of matter modeling is used to extract subjects from a set of textual content paperwork. This helps categorize and perceive the primary themes—subjects—inside a big textual content corpus.

Say, we now have a corpus of reports articles and we don’t have the paperwork and their corresponding classes beforehand. So we are able to carry out matter modeling on the gathering of reports articles to establish subjects comparable to politics, know-how, and leisure.

Genomic Knowledge Evaluation

Unsupervised studying additionally has functions in biomedical and genomic knowledge evaluation. Examples embrace clustering genes primarily based on their expression patterns to find potential associations with particular ailments.

I hope this text helped you perceive the fundamentals of unsupervised studying. The following time you’re employed with a real-world dataset, attempt to determine the educational downside at hand. And attempt to assess if it may be modeled as a supervised or an unsupervised studying downside.

In the event you’re working with a dataset with high-dimensional options, attempt to apply dimensionality discount earlier than constructing the machine studying mannequin. Continue to learn!

Bala Priya C is a developer and technical author from India. She likes working on the intersection of math, programming, knowledge science, and content material creation. Her areas of curiosity and experience embrace DevOps, knowledge science, and pure language processing. She enjoys studying, writing, coding, and low! Presently, she’s engaged on studying and sharing her data with the developer group by authoring tutorials, how-to guides, opinion items, and extra.